How Does an AI SDR Qualify Visitors in Real Time?

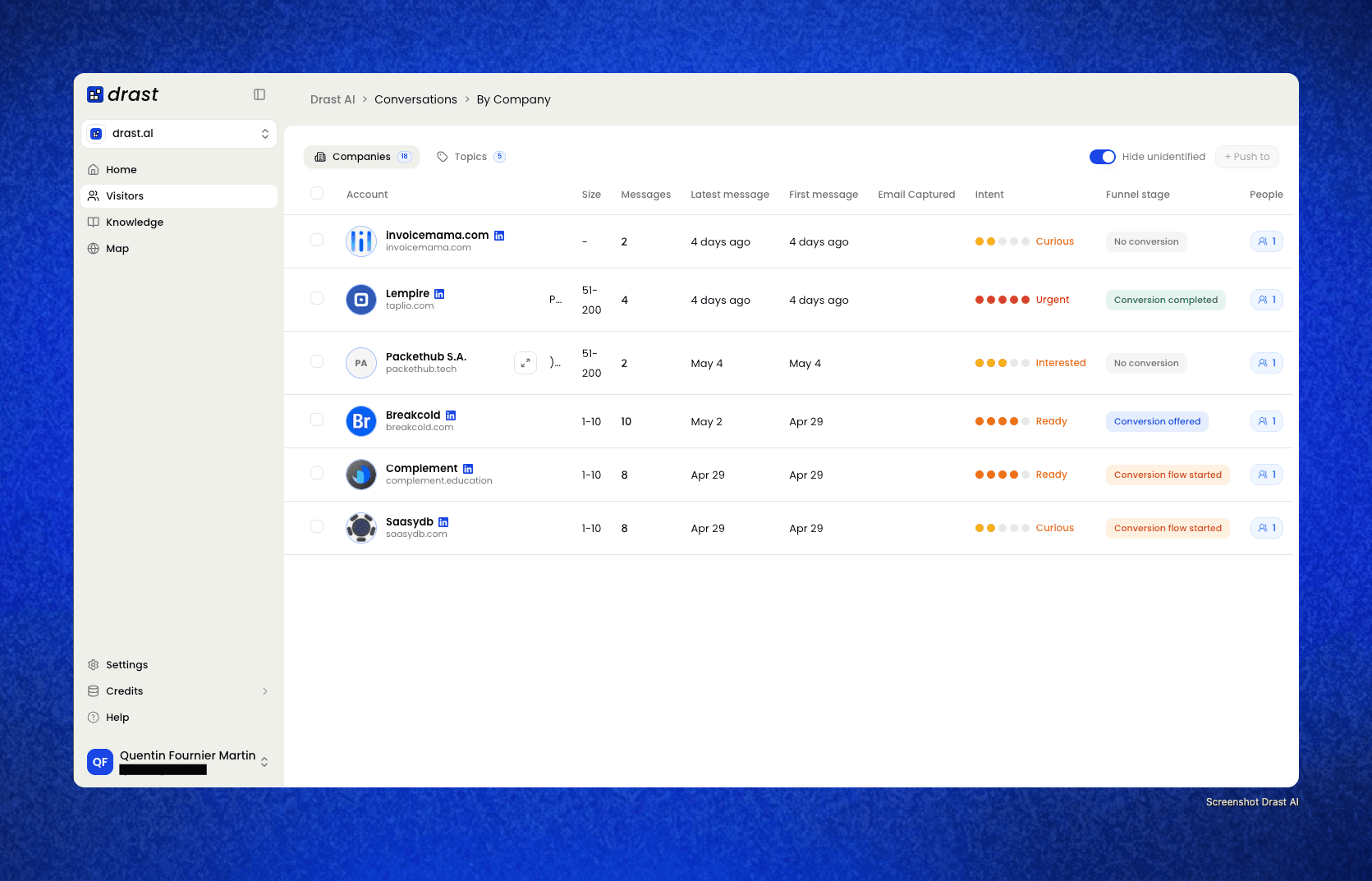

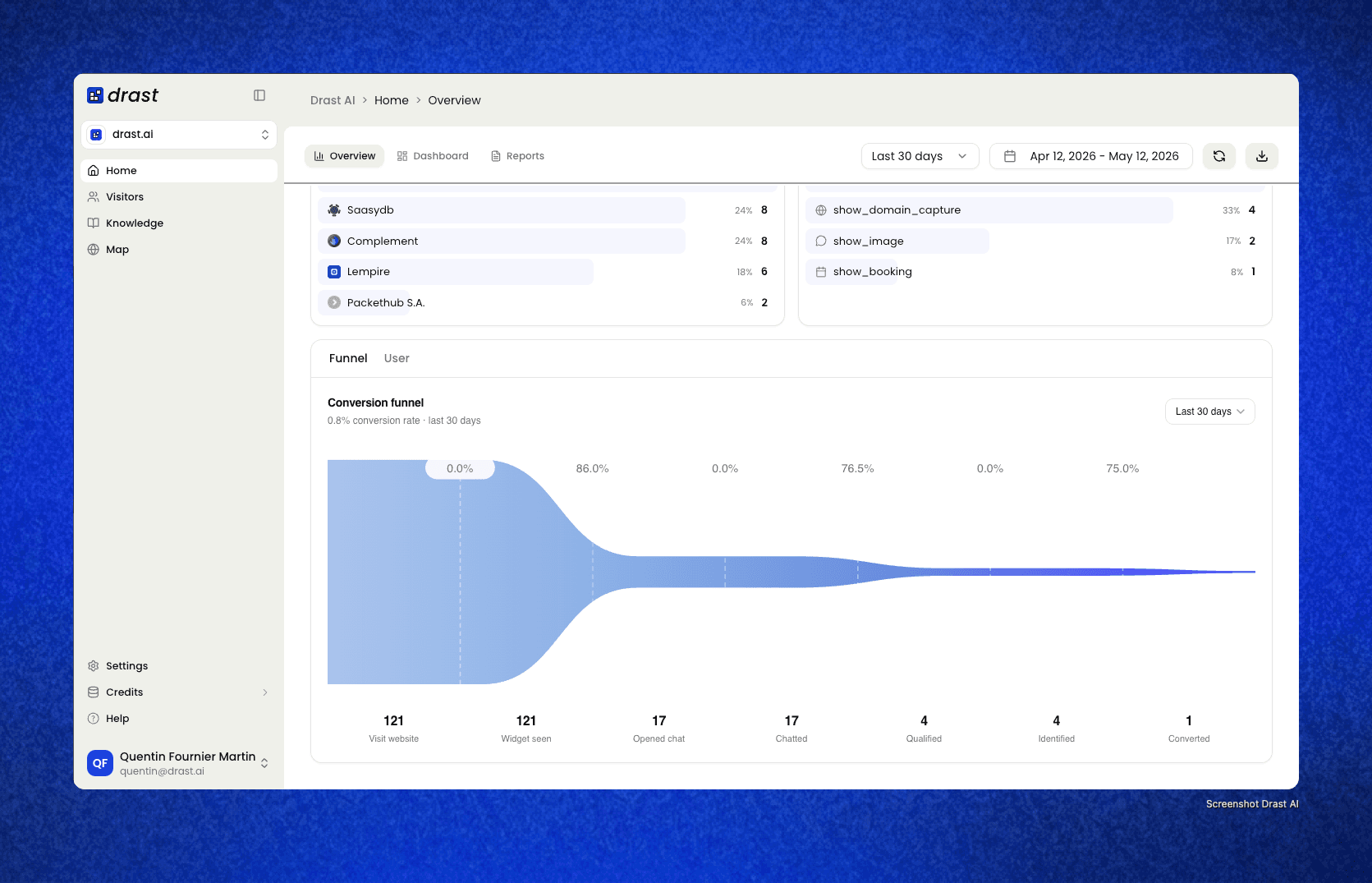

In the time it takes to read this paragraph, Drast has identified an anonymous visitor on a B2B SaaS website, scored them against the company's ICP, decided whether they're worth a meeting, and either booked one or routed them to nurture.

Total elapsed time: under 3 seconds.

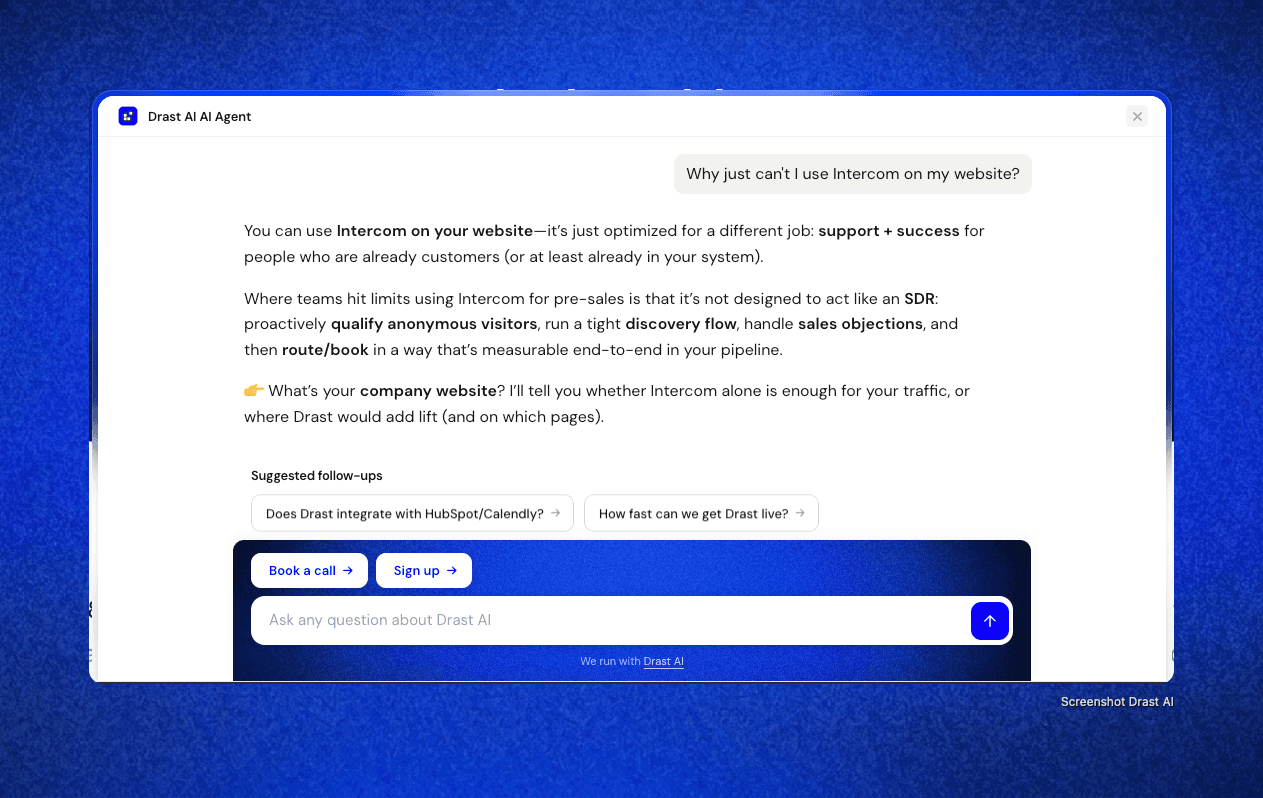

That's the gap between "AI SDR" and every chatbot that came before. A chatbot answers questions. An AI SDR runs a real sales motion — and the qualification step is where most products fail. They either interrogate the visitor ("What's your budget? Are you the decision-maker?") and watch them close the tab, or they skip qualification entirely and dump every conversation on the AE's calendar.

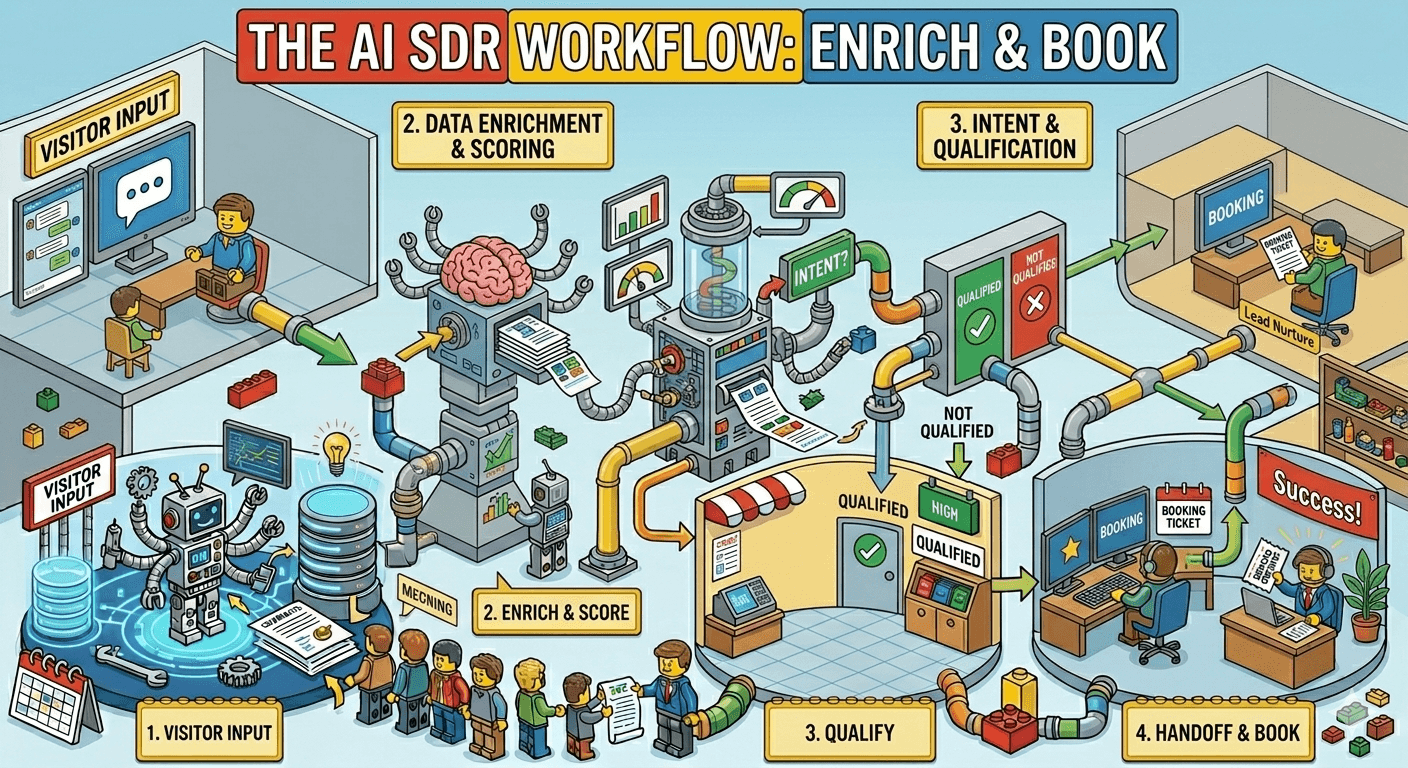

This is a walkthrough of how Drast actually qualifies visitors in real time. Five stages, all happening silently in the background while the visitor has what feels like a normal conversation. By the end of this article, you'll see exactly what's happening under the hood — and why most AI SDRs get this wrong.

TL;DR:

Drast qualifies anonymous visitors in real time across 5 stages: identification, scoring, objection handling, intent confirmation, and routing.

Identification runs in the first 200ms — reverse-IP + enrichment APIs identify ~45% of US B2B traffic before the first message.

Qualification is silent. The visitor never sees the scorecard. No "what's your budget?" interrogations.

Drast scores against your custom ICP scorecard (BANT, MEDDICC, or fully custom criteria you configure).

Qualified visitors get booked directly in-chat. Unqualified visitors get a graceful nurture path. AEs only see the meetings worth their time.


The qualification problem (and why most AI SDRs get it wrong)

Before walking through how Drast does it, let's name what most products get wrong.

Mistake 1: They ask qualification questions directly.

Visitor lands on the pricing page. Bot pops up. Message 1: "Hi! How can I help?" Message 2: "What's your company size?" Message 3: "What's your budget?" The visitor closes the tab at message 2 because they came to learn about a product, not to fill out a slow-motion form.

Mistake 2: They skip qualification entirely.

Other products go the opposite direction — they let everyone book a meeting, no filtering. The AE opens their calendar Monday morning to find 12 meetings booked over the weekend by students, job seekers, and competitors doing research. AE trust in the tool collapses in two weeks.

Mistake 3: They use vendor-default qualification.

The bot ships with generic logic — "qualified = company size > 10." That's not your ICP. Drast lets you configure the scorecard against your real ICP, with weights you tune over time.

Drast's approach: qualify silently, against your actual ICP, in real time, with the visitor never realizing it's happening.

Stage 1: Identification (the first 200ms)

The moment a visitor lands on a page where Drast is deployed, identification kicks in.

Three sources run in parallel:

Reverse-IP lookup — maps the visitor's IP to a company. Effective for ~30-40% of US B2B traffic.

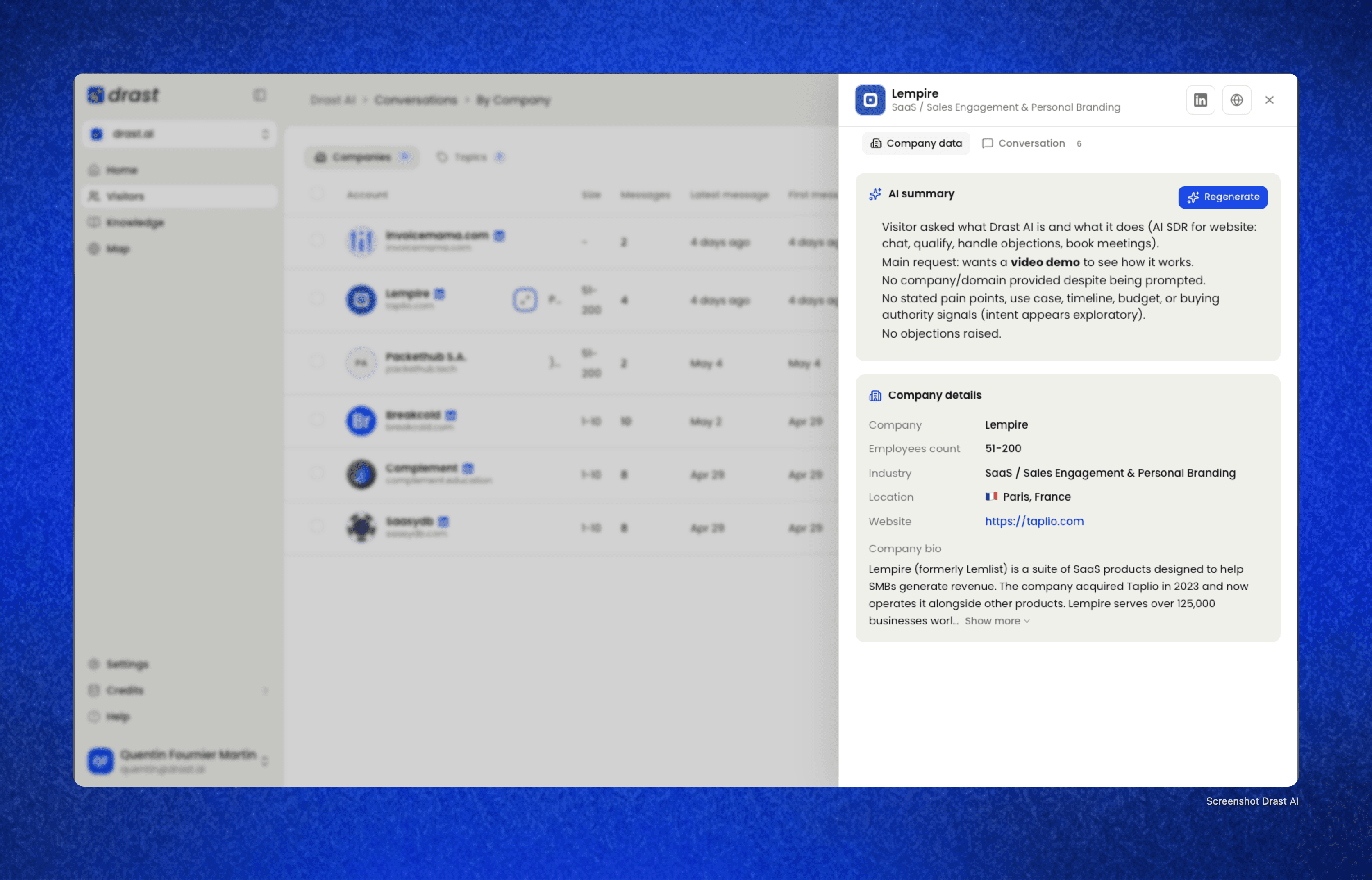

Domain enrichment — once the company is identified, Drast pulls industry, headcount, revenue range, tech stack, funding stage, and location from enrichment APIs.

Behavioral context — what page they landed on, what referrer brought them, whether they're a returning visitor.

Total combined identification rate on US B2B traffic: ~45%. Higher than industry standard because Drast layers multiple identification methods instead of relying on reverse-IP alone.
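To make the parallel lookup concrete, here is a minimal sketch of how the three sources could be combined under a time budget. It is not Drast's implementation: the function names, the stubbed lookup data, and the 0.2-second budget parameter are all assumptions for illustration. Because enrichment needs a company first, the sketch chains it behind reverse-IP and runs that chain concurrently with behavioral context.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Optional, Tuple


@dataclass
class VisitorProfile:
    company_domain: Optional[str] = None
    firmographics: dict = field(default_factory=dict)
    behavior: dict = field(default_factory=dict)


async def reverse_ip_lookup(ip: str) -> Optional[str]:
    """Hypothetical stub: map an IP to a company domain, or None if unknown."""
    return {"203.0.113.7": "acme.example"}.get(ip)


async def enrich_domain(domain: str) -> dict:
    """Hypothetical stub standing in for an enrichment API call."""
    return {"industry": "SaaS", "headcount": 80, "funding_stage": "Series B"}


async def behavioral_context(landing_page: str, referrer: str, returning: bool) -> dict:
    """Behavioral signals are available locally, so no lookup is needed."""
    return {"landing_page": landing_page, "referrer": referrer, "returning": returning}


async def company_and_firmographics(ip: str) -> Tuple[Optional[str], dict]:
    # Enrichment depends on the reverse-IP result, so these two are chained.
    domain = await reverse_ip_lookup(ip)
    if domain is None:
        return None, {}
    return domain, await enrich_domain(domain)


async def identify(ip: str, landing_page: str, referrer: str, returning: bool,
                   budget_s: float = 0.2) -> VisitorProfile:
    # Run the company chain and the behavioral lookup concurrently.
    # A timeout or lookup error degrades to an unidentified visitor.
    company_result, behavior = await asyncio.gather(
        asyncio.wait_for(company_and_firmographics(ip), timeout=budget_s),
        behavioral_context(landing_page, referrer, returning),
        return_exceptions=True,
    )
    if isinstance(company_result, BaseException):
        domain, firmographics = None, {}
    else:
        domain, firmographics = company_result
    if isinstance(behavior, BaseException):
        behavior = {}
    return VisitorProfile(domain, firmographics, behavior)


profile = asyncio.run(identify("203.0.113.7", "/pricing", "g2.com", returning=False))
```

An unknown IP simply yields a profile with no company and empty firmographics, which is the case the conversation has to fill in later.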

For the 55% of visitors Drast can't identify upfront, it doesn't give up — the conversation itself becomes part of the identification. If the visitor mentions their company, role, or what they're evaluating, Drast captures that and enriches in real time.

Why this matters for qualification: every qualification decision Drast makes downstream depends on this layer. Without identification, the bot is qualifying blind — guessing based on conversational signals alone. That's why Drast invests so heavily in this first stage: the quality of every other stage compounds from here.

Stage 2: Silent scoring against your ICP

This is where Drast diverges hardest from generic chatbots.

The visitor has no idea qualification is happening. They see a normal conversation. Behind the scenes, Drast is running a configurable scorecard you set up at deployment.

The scorecard takes any criteria you define:

Company size (range you specify)

Industry (whitelist or blacklist)

Tech stack (specific tools that signal fit)

Revenue range

Funding stage

Geography (country, region, time zone)

Role (seniority, function, decision-making influence)

Intent signals (pages visited, time on site, return visits)

Existing pipeline status (from your CRM)

Each criterion gets a weight you control. Maybe your ICP cares more about tech stack than headcount because you only convert teams already using HubSpot. Drast lets you weight that signal 3x more than other criteria.

The scorecard outputs a single decision:

Qualified → book meeting (route directly to in-chat calendar)

Marginal → continue conversation, deepen qualification

Unqualified → graceful nurture path (newsletter signup, content offer, no meeting)

Visitors never see the scorecard. They never see the score. They just see a useful, contextual conversation. That's the entire trick.
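A weighted scorecard like the one described above can be sketched in a few lines. This is an illustration under stated assumptions, not Drast's scoring code: the criterion names, the normalized 0 to 1 score, and the 0.7 and 0.4 thresholds are all hypothetical. The tech stack criterion carries 3x the weight of the others, mirroring the HubSpot example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float
    matches: Callable[[dict], bool]


def score(visitor: dict, criteria: List[Criterion]) -> float:
    """Return the share of total weight earned by matching criteria (0.0 to 1.0)."""
    total = sum(c.weight for c in criteria)
    earned = sum(c.weight for c in criteria if c.matches(visitor))
    return earned / total if total else 0.0


def decide(s: float, qualified_at: float = 0.7, marginal_at: float = 0.4) -> str:
    """Map a score to one of the three decisions. Thresholds are assumptions."""
    if s >= qualified_at:
        return "qualified"
    if s >= marginal_at:
        return "marginal"
    return "unqualified"


# Hypothetical ICP: tech stack is weighted 3x relative to the other criteria.
CRITERIA = [
    Criterion("headcount_50_500", 1.0, lambda v: 50 <= v.get("headcount", 0) <= 500),
    Criterion("uses_hubspot", 3.0, lambda v: "HubSpot" in v.get("tech_stack", [])),
    Criterion("senior_role", 1.0, lambda v: v.get("seniority") in {"VP", "C-level", "Director"}),
]

full_fit = {"headcount": 80, "tech_stack": ["HubSpot"], "seniority": "VP"}
stack_only = {"headcount": 5, "tech_stack": ["HubSpot"], "seniority": "Analyst"}
size_only = {"headcount": 80, "tech_stack": [], "seniority": "Analyst"}

decisions = [decide(score(v, CRITERIA)) for v in (full_fit, stack_only, size_only)]
```

With these weights, matching only the tech stack criterion still lands a visitor in the marginal band, while matching only headcount does not.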

Stage 3: Real-time objection handling

Qualification isn't a one-shot decision — it's a conversation. Visitors raise objections that change the qualification picture.

A visitor who objects "you're too expensive" might be:

A real prospect who needs ROI math

A junior researcher killing time

A competitor doing recon

A startup founder who'll convert at a lower tier

Drast's job is to handle the objection in a way that both moves the conversation forward and surfaces signal that updates the score.

Drast's objection library is populated from your sales team's actual call recordings. Not vendor defaults. Not generic "let me connect you with sales" punts. Real responses from your top AEs, made reproducible.

The library covers the standard B2B objections:

Pricing ("too expensive," "what does it cost," "free tier?")

Competitors ("we already use X," "how are you different from Y")

Security ("SOC 2," "GDPR," "data residency")

Integrations ("does this work with HubSpot, Salesforce, Slack")

Timing ("not ready," "evaluating next quarter")

Authority ("not the decision-maker")

Implementation ("how long to deploy," "how much effort")

Each response has a point of view. When a visitor says "you're too expensive," Drast doesn't say "let me connect you with sales." It anchors against competitor pricing, surfaces ROI math, and asks a follow-up question that either qualifies harder or unblocks the next step.
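As a rough illustration of the first step, recognizing which kind of objection a message contains, here is a keyword-based sketch. It is an assumption for illustration only: the trigger phrases are taken from the examples listed above, and a production system would likely use something more robust than substring matching.

```python
from typing import Optional

# Hypothetical trigger phrases, taken from the example objections above.
OBJECTION_KEYWORDS = {
    "pricing": ("too expensive", "what does it cost", "free tier"),
    "competitors": ("we already use", "how are you different"),
    "security": ("soc 2", "gdpr", "data residency"),
    "integrations": ("does this work with",),
    "timing": ("not ready", "next quarter"),
    "authority": ("not the decision-maker",),
    "implementation": ("how long to deploy", "how much effort"),
}


def classify_objection(message: str) -> Optional[str]:
    """Return the first objection category whose phrase appears in the message."""
    text = message.lower()
    for category, phrases in OBJECTION_KEYWORDS.items():
        if any(phrase in text for phrase in phrases):
            return category
    return None


category = classify_objection("Honestly, you're too expensive for us")
```

The returned category would then select a response from the library and feed a signal back into the score.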

Stage 4: Intent confirmation

Identification + scoring tells Drast who the visitor is. Intent confirmation tells Drast what they actually want.

A VP of Marketing at a perfect-fit company can still be:

Doing competitor research with no buying intent

Curious but not ready

Comparing options for a Q3 budget cycle

Actively evaluating to buy this week

Drast detects intent through three layers:

Page-level intent — visitors on pricing, demo request, or competitor comparison pages signal higher intent than visitors on the homepage or blog.

Conversational intent — questions like "how do I get started," "what's the implementation timeline," and "can I see a demo?" are buying-stage signals. Questions like "what's the difference between you and X?" are evaluation-stage signals.

Session intent — total time on site, pages visited in this session, whether this is a return visit, and whether they came from a high-intent referrer (G2, comparison searches, branded queries).

The intent layer is what separates Drast from "chatbots that book everyone." A visitor with great fit but tire-kicker intent gets routed to nurture, not to a meeting. A visitor with marginal fit but high intent (active evaluation, urgent timeline) might still be worth a meeting depending on your scorecard rules.
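The three intent layers could be combined along these lines. This is a sketch under assumptions rather than Drast's logic: the page paths, the phrase lists, the equal weighting of the layers, and the 0.6 and 0.3 cutoffs are all hypothetical.

```python
from typing import List

HIGH_INTENT_PAGES = ("/pricing", "/demo", "/compare")
BUYING_PHRASES = ("how do i get started", "implementation timeline", "see a demo")
EVALUATION_PHRASES = ("difference between you and",)
HIGH_INTENT_REFERRERS = ("g2.com",)


def page_intent(path: str) -> float:
    # str.startswith accepts a tuple of prefixes.
    return 1.0 if path.startswith(HIGH_INTENT_PAGES) else 0.0


def conversational_intent(messages: List[str]) -> float:
    text = " ".join(messages).lower()
    if any(p in text for p in BUYING_PHRASES):
        return 1.0  # buying-stage signal
    if any(p in text for p in EVALUATION_PHRASES):
        return 0.5  # evaluation-stage signal
    return 0.0


def session_intent(pages_viewed: int, returning: bool, referrer: str) -> float:
    signals = [
        pages_viewed >= 3,
        returning,
        any(r in referrer for r in HIGH_INTENT_REFERRERS),
    ]
    return sum(signals) / len(signals)


def intent_level(path: str, messages: List[str], pages_viewed: int,
                 returning: bool, referrer: str) -> str:
    """Average the three layers and bucket the result. Cutoffs are assumptions."""
    combined = (
        page_intent(path)
        + conversational_intent(messages)
        + session_intent(pages_viewed, returning, referrer)
    ) / 3
    if combined >= 0.6:
        return "high"
    if combined >= 0.3:
        return "medium"
    return "low"


level = intent_level("/pricing", ["Can I see a demo?"], 4, True, "https://www.g2.com/x")
```

Keeping intent separate from the fit score is what lets a great-fit, low-intent visitor be routed differently from a great-fit, high-intent one.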

Stage 5: Routing and handoff

Qualification ends with a routing decision. Drast supports four outcomes:

Direct meeting booking — visitor commits to a calendar slot in-chat. Drast handles calendar API, time zones, AE assignment, and confirmation flow.

Routed live chat handoff — for enterprise-flagged accounts (Fortune 1000, high deal-size signals), Drast pings a human rep in Slack with full context. Human takes over the conversation in real time.

Async nurture — visitor isn't qualified for a meeting today but is worth nurturing. Drast captures email, offers content, adds to the appropriate sequence.

Polite decline — clearly outside ICP. Drast offers self-serve resources or partner referrals. No meeting, no nurture, no wasted AE time.
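One way to picture the routing decision is as a small function over the fit decision, the intent level, and two flags. The rule order and the flags below are assumptions for illustration; in practice these would come from the routing rules you configure.

```python
from enum import Enum


class Outcome(Enum):
    BOOK_MEETING = "direct meeting booking"
    LIVE_HANDOFF = "routed live chat handoff"
    NURTURE = "async nurture"
    DECLINE = "polite decline"


def route(fit: str, intent: str, enterprise: bool, outside_icp: bool,
          book_marginal_high_intent: bool = True) -> Outcome:
    """Pick one of the four outcomes. Rule order is a hypothetical choice."""
    if outside_icp:
        return Outcome.DECLINE
    if enterprise and fit != "unqualified":
        return Outcome.LIVE_HANDOFF
    if fit == "qualified" and intent != "low":
        return Outcome.BOOK_MEETING
    if fit == "marginal" and intent == "high" and book_marginal_high_intent:
        return Outcome.BOOK_MEETING
    # Good fit with tire-kicker intent, or not enough fit for a meeting today.
    return Outcome.NURTURE


outcome = route("qualified", "high", enterprise=False, outside_icp=False)
```

The `book_marginal_high_intent` flag reflects the point made earlier: whether a marginal-fit, high-intent visitor earns a meeting depends on your scorecard rules.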

For booked meetings, the AE receives a Slack DM with a full context pack:

Company details (name, logo, industry, size, revenue range, funding, tech stack)

Contact info (role, seniority, LinkedIn link)

Session intent (pages visited, time on site, referrer, return visits)

Conversation transcript (full exchange, with objections and key questions highlighted)

Qualification reasoning (which scorecard criteria were met, score, why this lead was routed to a meeting)

Recommended approach (talk track suggestions, likely objections, competitive landscape)
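The context pack maps naturally onto a small data structure. The sketch below is illustrative only: the field names and the plain-text rendering are assumptions, and it does not call any Slack API; it just builds the message text that a handoff step could send.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ContextPack:
    company: dict
    contact: dict
    session: dict
    transcript: List[str]
    reasoning: List[str]
    recommended_approach: List[str] = field(default_factory=list)

    def to_message(self) -> str:
        """Render the six sections in a fixed order as plain text."""
        sections = [
            ("Company", [f"{k}: {v}" for k, v in self.company.items()]),
            ("Contact", [f"{k}: {v}" for k, v in self.contact.items()]),
            ("Session intent", [f"{k}: {v}" for k, v in self.session.items()]),
            ("Conversation transcript", self.transcript),
            ("Qualification reasoning", self.reasoning),
            ("Recommended approach", self.recommended_approach),
        ]
        lines = []
        for title, items in sections:
            lines.append(f"*{title}*")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Hypothetical example data.
pack = ContextPack(
    company={"name": "Acme Corp", "industry": "SaaS", "size": 80},
    contact={"role": "VP of Marketing"},
    session={"pages_visited": 4, "referrer": "g2.com"},
    transcript=["Visitor: Can I see a demo?"],
    reasoning=["uses_hubspot met", "senior_role met"],
    recommended_approach=["Lead with ROI math"],
)
message = pack.to_message()
```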

The AE walks into the meeting knowing more than the prospect expects them to know. That's not a luxury — it's table stakes for inbound conversion in 2026.

How Drast's qualification compares to alternatives

Quick reference for how Drast's approach differs from common alternatives.

| Approach | Identification | Qualification Method | Visitor Experience | Output |
|---|---|---|---|---|
| Form + SDR follow-up | Self-reported only | Manual SDR review (4-24h delay) | Fill form, wait | Often-cold meeting |
| Generic chatbot | None or basic | Direct interrogation ("budget?") | Annoyed, often bounces | Random meetings |
| Live chat (humans) | Whatever the rep can pull | Human judgment in real time | High-friction but personal | Best conversion, doesn't scale |
| Drast (AI SDR) | Reverse-IP + enrichment + behavioral (~45%) | Silent scorecard, real-time | Natural conversation | Qualified meetings + context pack |

The Drast approach captures the speed and scale of automation while preserving the qualification rigor of a senior SDR. Not the qualification rigor of "a bot that books everyone."

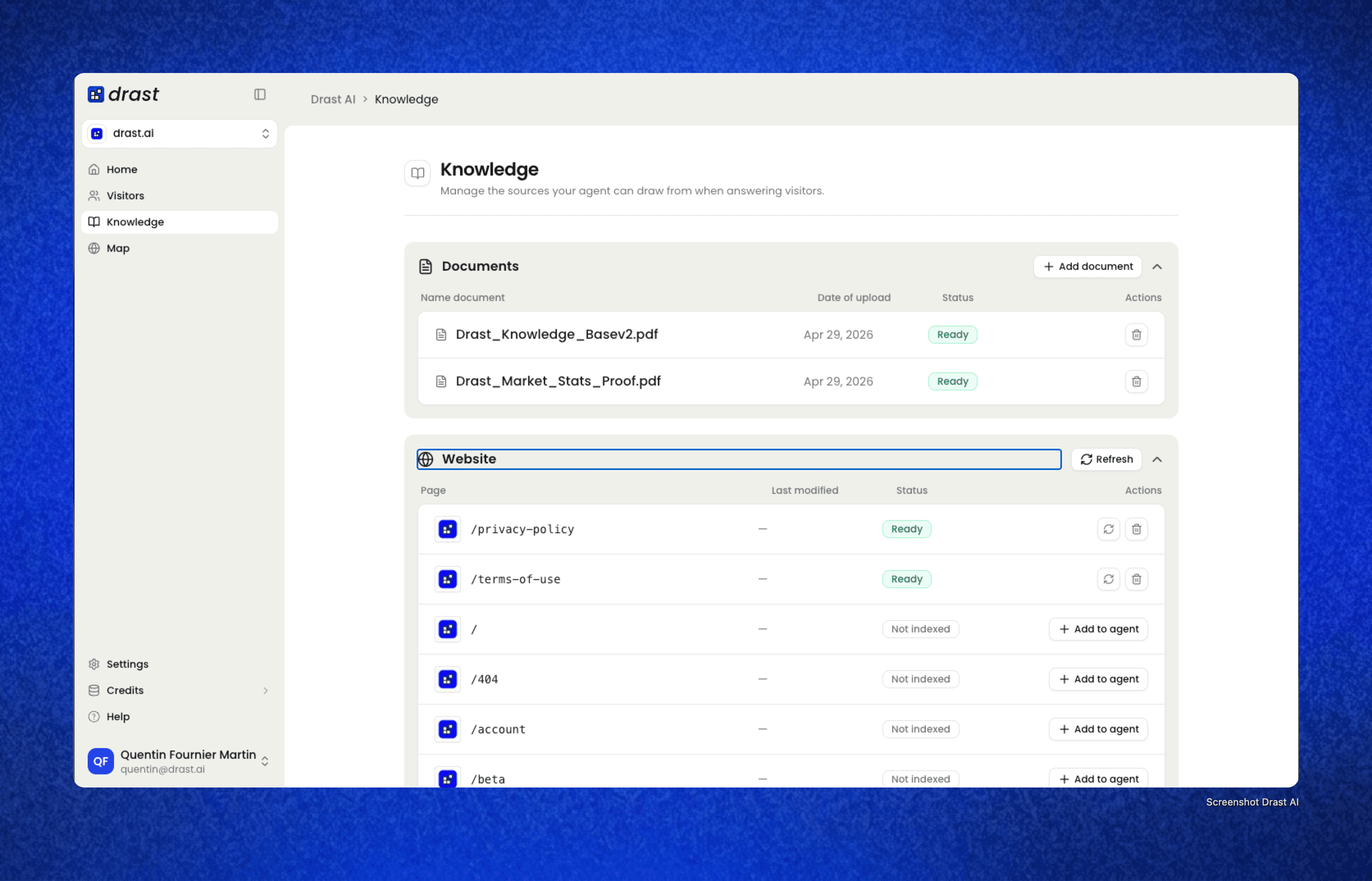

What you configure when you deploy Drast

The work isn't the AI. The AI is solved. The work is configuring the qualification logic against your specific ICP. When you deploy Drast, here's what gets set up:

ICP scorecard — your custom criteria, weighted, with a threshold for "qualified." Built with your sales team, not vendor defaults.

Objection library — populated from your actual sales call recordings. Drast handles the import; you review and edit.

Routing rules — which qualification outcomes go to which AE, which trigger live chat handoff, which go to nurture.

Calendar integration — Google Calendar, Outlook, or Calendly, with AE-level routing logic.

CRM sync — Salesforce or HubSpot, with bidirectional updates so Drast knows account history and your CRM stays clean.

Slack handoff — context pack format, which channels get notified, who gets DMed when.

Standard deployment timeline: 5-10 business days from contract signature to live qualification. Most of that is configuring the scorecard and importing objection responses, not technical setup.

When real-time visitor qualification fails (and how to fix it)

Three failure modes to watch for, even with Drast deployed.

Failure 1: Scorecard drift. Your ICP changes. New segments emerge. Your top performers tell you the bot is qualifying meetings that don't close. Fix: review the scorecard monthly. Pull AE feedback on every booked meeting (qualified yes/no), update weights based on what's actually predicting closed-won.

Failure 2: Objection library staleness. Your competitive landscape shifts. New competitors emerge, pricing changes, security questions get more sophisticated. Fix: review and update the objection library quarterly. Pull from recent call recordings, not the ones from six months ago.

Failure 3: Handoff quality erosion. AEs stop reading the context packs because something in the format isn't useful. They show up cold to meetings even though Drast did its job. Fix: AE feedback loop. Quarterly survey on context pack quality, iterate on what they actually use vs ignore.

Drast's qualification system is good on day one. It's great after three months of tuning. The teams that get the most value treat it as a continuously-improving system, not a set-and-forget product.
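For the scorecard review described under Failure 1, the two numbers worth tracking can be computed from a simple log of booked meetings. This is a minimal sketch with a hypothetical record format: each meeting carries the AE's yes/no feedback, whether it closed, and which criteria it met.

```python
from collections import defaultdict
from typing import Dict, List


def qualification_accuracy(meetings: List[dict]) -> float:
    """Share of booked meetings the AE confirmed as genuinely qualified."""
    if not meetings:
        return 0.0
    return sum(m["ae_confirmed"] for m in meetings) / len(meetings)


def closed_won_rate_by_criterion(meetings: List[dict]) -> Dict[str, float]:
    """For each criterion, the closed-won rate among meetings that met it."""
    met = defaultdict(int)
    won = defaultdict(int)
    for m in meetings:
        for criterion in m["criteria_met"]:
            met[criterion] += 1
            won[criterion] += m["closed_won"]
    return {criterion: won[criterion] / met[criterion] for criterion in met}


# Hypothetical feedback log.
meetings = [
    {"ae_confirmed": True, "closed_won": True, "criteria_met": ["uses_hubspot", "senior_role"]},
    {"ae_confirmed": True, "closed_won": False, "criteria_met": ["senior_role"]},
    {"ae_confirmed": False, "closed_won": False, "criteria_met": ["headcount_50_500"]},
    {"ae_confirmed": True, "closed_won": True, "criteria_met": ["uses_hubspot"]},
]

accuracy = qualification_accuracy(meetings)
rates = closed_won_rate_by_criterion(meetings)
```

A criterion with a high closed-won rate is a candidate for more weight and one with a low rate for less, though with only a handful of meetings these rates are too noisy to act on.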

Frequently Asked Questions

How does an AI SDR qualify visitors in real time?

An AI SDR like Drast qualifies visitors through five stages: identification (reverse-IP and enrichment APIs identify the company within 200ms), silent scoring (against a configurable ICP scorecard), real-time objection handling (responses with a point of view, not punts to humans), intent confirmation (page-level, conversational, and session signals), and routing (direct meeting booking, live chat handoff, nurture, or polite decline). Identification finishes before the first message, and the score keeps updating as the conversation unfolds. The visitor never sees the qualification logic; they just experience a useful conversation.

What's the difference between AI SDR qualification and chatbot qualification?

A chatbot doesn't qualify — it answers questions. An AI SDR runs a full qualification motion against your ICP scorecard. The key differences: AI SDRs identify anonymous visitors via enrichment APIs (chatbots don't), score conversations silently against custom criteria (chatbots don't have scorecards), handle objections with a point of view (chatbots punt to humans), and route to outcomes including direct meeting booking (chatbots typically can't book autonomously).

How accurate is real-time visitor qualification?

Drast's identification rate sits around 45% on US B2B traffic — meaning the bot has full company-level context for 45% of anonymous visitors before the first message. Qualification accuracy (% of booked meetings that AEs confirm as genuinely qualified) reaches 85%+ after 4-6 weeks of scorecard tuning. The system keeps improving as it learns from AE feedback on which booked meetings actually closed.

Does Drast ask visitors qualification questions directly?

No. Direct interrogation ("what's your budget?", "what's your company size?") kills conversion rates. Drast qualifies silently in the background using identification data, behavioral signals, and conversational signals. The visitor experiences a natural, helpful conversation while the scorecard runs invisibly. They only see the qualification outcome when Drast routes them: to a calendar booking, a nurture path, or a content offer.

Can I customize Drast's qualification logic?

Yes — full ICP scorecard customization is core to Drast. You configure the criteria (company size, industry, tech stack, role, intent signals, etc.), the weights, and the qualification threshold. Vendor-default scorecards are useless because they don't know your business. Drast's setup process is built around configuring this with your sales team using real closed-won data, not generic templates.

How does Drast handle visitors it can't identify?

For the ~55% of visitors Drast can't identify via reverse-IP and enrichment, qualification continues based on conversational signals. If the visitor mentions their company, role, or what they're evaluating, Drast captures that data and enriches in real time. If they remain fully anonymous, Drast qualifies based on intent signals (pages visited, time on site) and conversational quality alone. Anonymous-but-high-intent visitors can still be routed to meetings if they pass the scorecard threshold.

What happens after Drast qualifies a visitor?

Four possible outcomes: (1) Qualified visitors book a meeting directly in-chat, with calendar integration and AE routing. (2) Enterprise-flagged accounts get routed to a live human rep via Slack handoff. (3) Visitors who aren't qualified for a meeting today but are worth nurturing enter a nurture path (email capture, content offer, sequence enrollment). (4) Out-of-ICP visitors get a polite decline with self-serve resources. The AE only sees the meetings worth their time, with full context packs delivered before the conversation.

How quickly does Drast qualify a visitor?

Identification completes within 200ms of page load. Initial qualification scoring updates with every conversational turn (typically every 5-15 seconds). The full qualification cycle — from anonymous visitor to either booked meeting or routed nurture — typically completes within 60-120 seconds of the visitor engaging. Compare this to traditional form-then-SDR-follow-up cycles that take 4-24 hours, often missing the visitor entirely.

See Drast qualify visitors live

The best way to understand how this works is to see it on your own site. Book a demo with Drast — we'll walk through the qualification flow with one of your real anonymous visitors. See our video demo here